LiteLLM – what is it?

2026-04-14

De Novo Cloud Expert

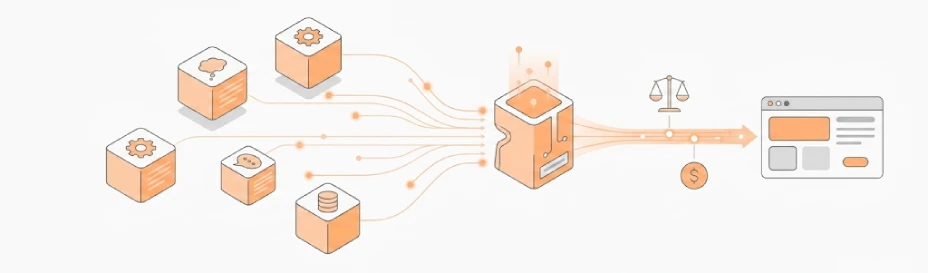

LiteLLM is a library and proxy gateway that provides unified access to a wide range of models and providers through a single OpenAI-compatible interface. It can be used as a software development kit (SDK) inside an application or as a standalone service that standardizes model calls regardless of the underlying provider. The official documentation states support for over 100 models and providers.
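To make the "single OpenAI-compatible interface" concrete, here is a minimal sketch of a client that talks to a LiteLLM proxy using only the Python standard library. The proxy address, the key, and the two model aliases are assumptions for illustration; real values depend on how the proxy is deployed and configured.

```python
import json
import urllib.request

# Assumptions for illustration: a LiteLLM proxy reachable at this address,
# a placeholder key, and model aliases defined in the proxy's configuration.
PROXY_BASE_URL = "http://localhost:4000"
API_KEY = "sk-example-key"


def build_chat_request(model: str, user_text: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the proxy."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=f"{PROXY_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON reply (requires a running proxy)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical aliases: only the model string differs between providers,
# the request shape and endpoint stay the same.
req_a = build_chat_request("alias-provider-a", "Hello")
req_b = build_chat_request("alias-provider-b", "Hello")
```

Calling `send(req_a)` against a running proxy would return an OpenAI-style response body. The application code does not need to know which provider sits behind each alias.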

In multi-provider architectures, LiteLLM is used as an intermediary layer between application logic and models to centrally track costs, enforce budgets for users or virtual keys, and simplify switching between providers. This approach is particularly valuable in environments that require governance, standardization, and control over how models are used in production.
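The idea of per-key budget enforcement can be illustrated with a small, self-contained sketch. This is a conceptual model only, not LiteLLM's implementation: the class names, the per-token prices, and the key name are all hypothetical, and a real gateway would persist spend in a database rather than in memory.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a key has used up its budget."""


@dataclass
class KeyBudget:
    max_budget_usd: float
    spent_usd: float = 0.0


class BudgetGate:
    """Hypothetical in-memory gate that sits between callers and models."""

    def __init__(self) -> None:
        self._budgets: dict[str, KeyBudget] = {}

    def register(self, key: str, max_budget_usd: float) -> None:
        self._budgets[key] = KeyBudget(max_budget_usd)

    def check(self, key: str) -> None:
        """Reject the call up front if the key has no budget left."""
        budget = self._budgets[key]
        if budget.spent_usd >= budget.max_budget_usd:
            raise BudgetExceeded(f"{key} spent {budget.spent_usd:.4f} USD")

    def record(self, key: str, prompt_tokens: int, completion_tokens: int,
               price_in: float, price_out: float) -> float:
        """Add the cost of a finished call to the key's running total."""
        cost = prompt_tokens * price_in + completion_tokens * price_out
        self._budgets[key].spent_usd += cost
        return cost

    def spent(self, key: str) -> float:
        return self._budgets[key].spent_usd


gate = BudgetGate()
gate.register("team-a-key", max_budget_usd=1.0)
gate.check("team-a-key")  # passes: nothing spent yet
gate.record("team-a-key", prompt_tokens=1000, completion_tokens=500,
            price_in=0.001, price_out=0.001)  # made-up prices
```

After the recorded call the key is over its limit, so the next `gate.check("team-a-key")` raises `BudgetExceeded`. Placing this check in one intermediary layer is what lets costs be tracked centrally instead of in every application.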

Additionally, LiteLLM supports load balancing across multiple proxy instances, which is important for Kubernetes environments and scalable AI services. The documentation also describes mechanisms for sharing RPM/TPM (requests per minute and tokens per minute) usage across instances via Redis, as well as the ability to build a proxy layer that acts as a single access point to multiple models within a production infrastructure.
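Why a shared store matters for RPM limits can be shown with a toy model. The sketch below is an assumption-laden illustration, not LiteLLM's routing code: a plain dictionary stands in for Redis, and the deployment names, limits, and selection rule (most remaining headroom) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Deployment:
    name: str
    rpm_limit: int


class SharedUsage:
    """In-memory stand-in for a shared counter store such as Redis."""

    def __init__(self) -> None:
        self._counts: dict[tuple[str, int], int] = {}

    def get(self, deployment: str, minute: int) -> int:
        return self._counts.get((deployment, minute), 0)

    def increment(self, deployment: str, minute: int) -> int:
        key = (deployment, minute)
        self._counts[key] = self._counts.get(key, 0) + 1
        return self._counts[key]


def route(deployments: list[Deployment], usage: SharedUsage,
          minute: int) -> Optional[str]:
    """Pick the deployment with the most RPM headroom and count the request.

    Returns None when every deployment has reached its limit for this minute.
    """
    available = [d for d in deployments
                 if usage.get(d.name, minute) < d.rpm_limit]
    if not available:
        return None
    chosen = max(available,
                 key=lambda d: d.rpm_limit - usage.get(d.name, minute))
    usage.increment(chosen.name, minute)
    return chosen.name


deployments = [Deployment("deployment-a", rpm_limit=2),
               Deployment("deployment-b", rpm_limit=1)]
shared = SharedUsage()  # both "proxy instances" below read the same counters

# Requests alternate between two hypothetical proxy instances; because they
# share one usage store, the combined traffic still respects each limit.
results = [route(deployments, shared, minute=0) for _ in range(4)]
```

If each proxy instance kept its own counters, two instances could together send twice the allowed requests to the same deployment. Sharing the counters is what keeps the limit global.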