What is Machine Learning?

2024-05-17

In a period of rapid digital transformation, machine learning is becoming a key technology that helps organizations make fuller use of their data. Machine learning (ML) algorithms can learn from data, detect patterns, make predictions, and automate decision-making.

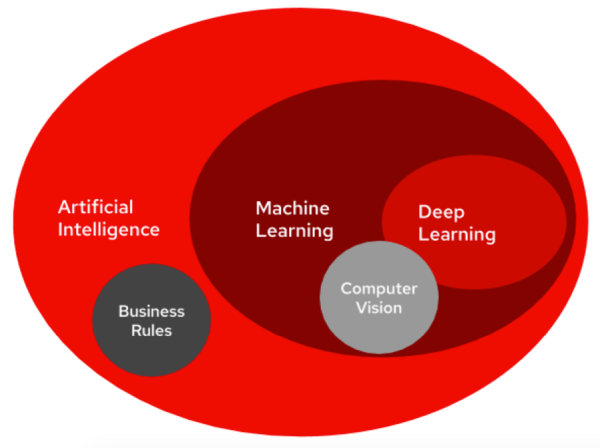

Machine Learning (ML) is a branch of Artificial Intelligence (AI) that develops algorithms capable of learning from data rather than following hand-written rules. Unlike traditional approaches, where computing systems are explicitly programmed to perform specific tasks, ML models improve their performance on a task by analyzing data and discovering patterns within it. This allows ML systems to solve problems that are difficult or impossible to program manually.

Machine Learning methods

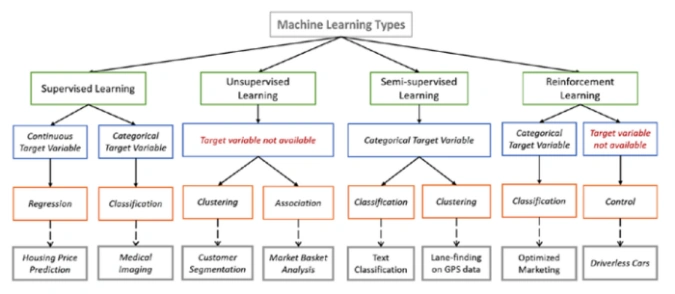

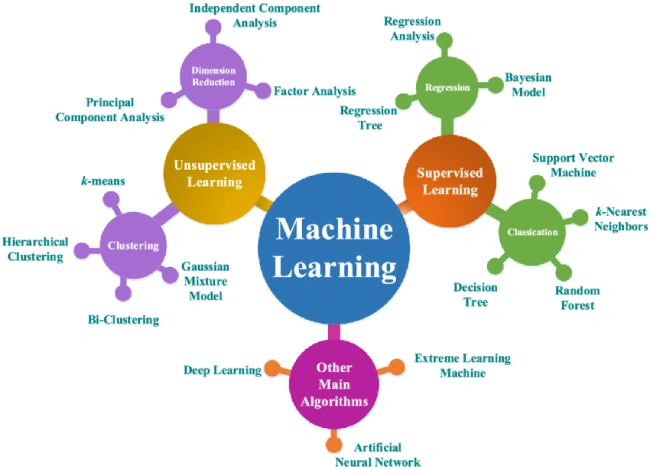

There are many different types of ML algorithms, each suited to solving specific tasks. The most common types of Machine Learning include:

- Supervised learning. The algorithm is trained on a dataset where each example has a label giving the desired output (a class or a numeric value).

- Unsupervised learning. The algorithm is trained on a dataset that has no labels.

- Semi-supervised learning. The algorithm is trained on a dataset that contains both labeled and unlabeled examples.

- Reinforcement Learning (RL). The algorithm learns to perform tasks by receiving a “reward” or a “penalty” for its actions.

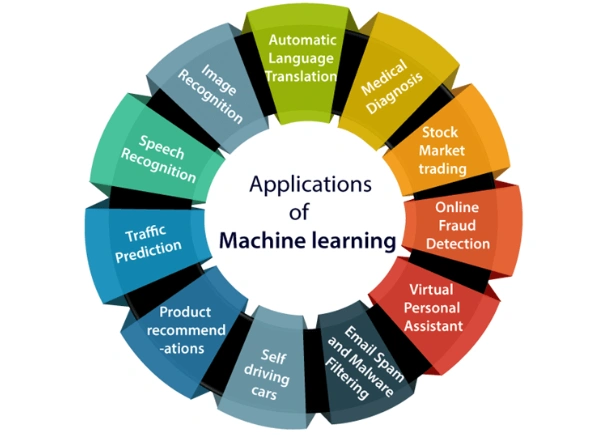

Below, we will examine these methods in more detail. ML is already used across many sectors of the economy; here are just a few examples.

- Healthcare. For disease diagnosis, drug discovery, and medical image analysis.

- Finance. For fraud detection, risk assessment, and asset management.

- Marketing. For ad personalization, customer targeting, and analysis of customer and buyer data.

- Manufacturing. For demand forecasting, production process optimization, and quality control.

- Robotics. For developing autonomous transport and controlling various types of robots.

Today, Machine Learning technologies are developing extremely fast, and their potential for solving a wide range of problems is enormous. Going forward, it is reasonable to expect ML to influence most areas of our lives. But before moving to a more detailed review of ML approaches and methods, let us take a brief excursion into its history.

A brief history of ML

The idea of machine learning emerged around the middle of the last century.

One of the pioneers of the field was the famous English scientist Alan Turing, who in his 1950 paper “Computing Machinery and Intelligence” described the possibility of creating computing machines capable of “learning.”

In the 1960s, the first machine learning algorithms based on statistical methods were developed.

In the 1970s came the era of symbolic AI, when computers “learned” from rules and logic.

In the 1980s, interest grew in neural networks, whose operation is based on fundamental principles of how the brain functions.

In the 1990s, machine learning experienced a breakthrough thanks to the development of algorithms capable of processing massive volumes of data, as well as the emergence of relatively affordable computing power, which enabled many research groups and companies worldwide to work with ML models.

In the 2000s, machine learning became one of the most dynamic areas of AI thanks to increasing computational power and progress in deep learning, including architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs).

The 2010s can be described as the period when deep learning flourished and was applied across many domains, including computer vision, Natural Language Processing (NLP), and robotics. In particular, between 2013 and 2015 there was a surge of interest in ML from both business and academia. A large number of new ML applications emerged in areas such as finance, healthcare, and manufacturing. Popular ML frameworks, TensorFlow (2015) and PyTorch (2016), also appeared, making the development and deployment of ML models more accessible.

Between 2015 and 2018, ML became a key component of AI, stimulating further research and development. Breakthroughs occurred in NLP: neural network–based ML algorithms demonstrated strong progress in tasks such as machine translation and chatbots.

From 2020–2021 to the present, collaborative learning technologies have been actively developing, enabling multiple ML models to learn from one another and improve overall performance. Supported by new, more powerful hardware, neuromorphic computing (whose principles are based on modeling processes of the human brain) has received a significant boost. Quantum machine learning is also developing, exploring the potential of quantum computing to accelerate machine learning algorithms.

In recent years, cloud-based ML (Machine Learning Cloud) has also been advancing actively: model training is performed using the resources of a commercial cloud provider. Now, after this short historical overview, we will move directly to the most relevant machine learning methods used today.

Supervised learning

Supervised learning is the most common type of machine learning. An ML algorithm is trained on a dataset where each example has a label. The label describes the desired outcome for that example. Based on this data, the algorithm learns to map input data to output labels.

There are many types of supervised learning algorithms, including:

- Linear regression. Used to predict a numeric value based on one or more inputs.

- Logistic regression. Used to classify data into categories.

- Decision trees. Used to make decisions based on a set of rules.

- K-Nearest Neighbors (KNN). Used to classify data based on similarity to other data points in the dataset.

- Support Vector Machines (SVM). Used for classification and regression.
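As a minimal sketch of the supervised setup described above, the following fits a one-feature linear regression by ordinary least squares using only the Python standard library. The function names (`fit_line`, `predict`) and the apartment data are hypothetical and chosen purely for illustration; real projects would normally rely on an established ML library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for a single input feature.

    Returns (slope, intercept) of the line that minimizes squared error.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the learned mapping from input to output."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical labeled dataset: input = apartment area, label = price.
areas = [30.0, 45.0, 60.0, 75.0, 90.0]
prices = [90.0, 135.0, 180.0, 225.0, 270.0]

model = fit_line(areas, prices)      # "training" on labeled examples
estimate = predict(model, 100.0)     # prediction for an unseen input
```

The labels (`prices`) are what make this supervised: the algorithm is told the desired output for every training example and generalizes from them to new inputs.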

Unsupervised learning

Unsupervised learning is a type of machine learning where the algorithm is trained on an unlabeled dataset. The goal is to independently find patterns and identify structures in the data, group objects by similar characteristics, and potentially support predictions about how a situation may evolve.

Unsupervised learning is often used for grouping data into clusters with similar characteristics (clustering), reducing the number of features while preserving as much information as possible (dimensionality reduction), and identifying unusual or outlier data (anomaly detection).

Unsupervised learning algorithms include:

- K-means. A clustering algorithm that divides data into a predefined number of groups.

- Approximate k-means. A more efficient variant of the previous algorithm that can handle large datasets.

- Principal Component Analysis (PCA). A dimensionality reduction method that projects data onto a smaller set of new features (principal components) that capture most of the variance in the dataset.
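To illustrate clustering without labels, here is a minimal k-means sketch in plain Python. It assumes, for reproducibility, that the first `k` points serve as the initial centroids; practical implementations usually pick initial centroids more carefully. The function name and sample points are hypothetical.

```python
def kmeans(points, k, iterations=10):
    """Plain k-means on tuples of floats.

    Assumption: the first k points are used as initial centroids so that
    the result is deterministic.
    """
    centroids = [points[i] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            distances = [
                sum((a - b) ** 2 for a, b in zip(p, c)) for c in centroids
            ]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each centroid to the mean of its cluster.
        for i, cluster in enumerate(clusters):
            if cluster:  # an empty cluster keeps its previous centroid
                centroids[i] = tuple(
                    sum(dim) / len(cluster) for dim in zip(*cluster)
                )
    return centroids, clusters


# Hypothetical unlabeled data with two visible groups.
points = [(1.0, 1.0), (9.0, 9.0), (1.0, 2.0),
          (2.0, 1.0), (8.0, 9.0), (9.0, 8.0)]

centroids, clusters = kmeans(points, k=2)
```

No labels are supplied anywhere: the grouping emerges only from the distances between points, and the number of groups `k` has to be chosen in advance.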

A combination of the two approaches above (supervised and unsupervised) is also possible. This combination is usually called “semi-supervised learning” (or mixed learning).

In this case, the algorithm is trained on a dataset that contains both labeled and unlabeled examples. Labeled examples are used to train the algorithm as in supervised learning, while unlabeled examples are used to discover patterns in the data as in unsupervised learning.

Semi-supervised learning can be effective in situations where there is a small labeled dataset and a large unlabeled dataset—for example, during self-training or collaborative learning of ML models.
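The self-training idea can be sketched as follows. This is an illustrative toy under stated assumptions, not a standard library routine: the classifier is a one-dimensional nearest-class-mean rule, "confidence" is the gap between the distances to the two closest class means, and the names `class_means`, `self_train`, and `margin` are hypothetical. At least two classes are assumed.

```python
def class_means(labeled):
    """Mean feature value per class from (value, label) pairs."""
    sums, counts = {}, {}
    for value, label in labeled:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def self_train(labeled, unlabeled, margin=2.0, rounds=5):
    """Pseudo-label confident unlabeled points, then refit; repeat."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    for _ in range(rounds):
        means = class_means(labeled)
        still_unlabeled = []
        for value in remaining:
            ranked = sorted(means, key=lambda lab: abs(value - means[lab]))
            best, second = ranked[0], ranked[1]
            gap = abs(value - means[second]) - abs(value - means[best])
            if gap >= margin:
                labeled.append((value, best))   # accept the pseudo-label
            else:
                still_unlabeled.append(value)   # too ambiguous, keep aside
        if len(still_unlabeled) == len(remaining):
            break                               # no progress in this round
        remaining = still_unlabeled
    return class_means(labeled), remaining


# Hypothetical data: two labeled examples and five unlabeled ones.
labeled = [(1.0, "low"), (10.0, "high")]
unlabeled = [2.0, 3.0, 8.0, 9.0, 5.4]

final_means, ambiguous = self_train(labeled, unlabeled)
```

Here the small labeled set bootstraps the model, the confidently classified unlabeled points refine the class means, and the ambiguous point near the boundary is left unlabeled rather than guessed.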

Reinforcement Learning

Reinforcement Learning (RL) is a type of machine learning in which an algorithm (the agent) learns through interaction with an environment. Unlike supervised learning, where the algorithm learns from labeled datasets, an RL algorithm receives “reinforcement” (a “reward” or a “penalty”) for the outcomes of its actions, and over time learns to choose actions that maximize cumulative reward.
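The reward-driven loop described above can be illustrated with tabular Q-learning, one common RL algorithm, on a toy environment. Everything here is an assumption made for the sketch: a five-cell corridor where the agent starts in cell 0 and receives a reward of 1.0 on reaching cell 4, uniformly random exploration during training, and the hyperparameters `alpha` and `gamma`.

```python
import random

N_STATES = 5          # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)    # action index 0 = step left, index 1 = step right


def step(state, action_index):
    """Toy environment: move, stop at the left wall, reward 1.0 at the goal."""
    next_state = max(0, state + ACTIONS[action_index])
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes=200, alpha=0.5, gamma=0.9, seed=0):
    """Tabular Q-learning with uniformly random exploration."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.randrange(2)            # explore at random
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[next_state])
            # Move the estimate toward reward + discounted future value.
            q[state][action] += alpha * (
                reward + gamma * best_next - q[state][action]
            )
            state = next_state
    return q


q_table = train()
# Greedy policy for the non-terminal cells: pick the higher-valued action.
greedy_policy = [row.index(max(row)) for row in q_table[:-1]]
```

No example ever tells the agent that "right" is correct. The preference for stepping right in every cell emerges only from the reward signal propagating backward through the value estimates.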

Advantages of RL:

- Ability to self-learn. Such models do not require a large labeled dataset.

- Effectiveness in complex tasks. RL models can be used for tasks that are difficult or impossible to solve using other machine learning methods.

- Adaptability. Algorithms can adapt to new conditions and tasks.

Deep Learning

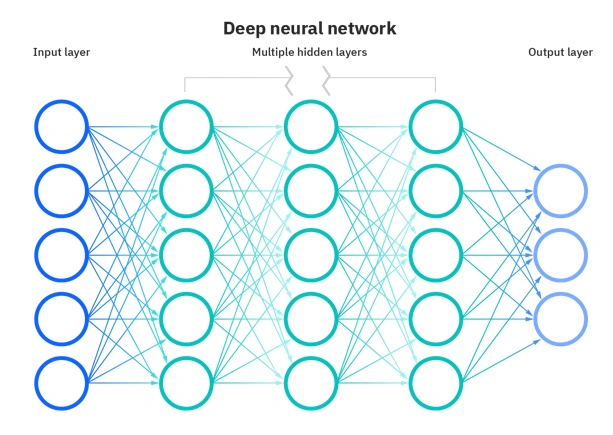

Deep Learning is a subset of machine learning algorithms and methods that uses multi-layer (deep) artificial neural networks to solve problems.

Artificial neural networks are mathematical models built on principles similar to biological neural networks (for example, the human brain). They consist of multi-layer networks of artificial neurons—special nodes (processors), each performing simple computations. Each neuron receives signals from other neurons, processes them, and produces an output signal. Information passes through the layers of the neural network, where at each stage it is transformed and analyzed. Thanks to multi-layer architecture, Deep Learning can uncover complex patterns in massive datasets and solve AI tasks that previously seemed unattainable.
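To make the layer-by-layer flow of information concrete, here is a minimal forward pass through a two-layer network in plain Python. The weights are hand-picked for illustration (they make the network compute XOR) rather than learned from data, and the names `dense`, `forward`, and the weight constants are hypothetical.

```python
def relu(x):
    """Rectified linear unit: a common neuron activation function."""
    return max(0.0, x)


def identity(x):
    return x


def dense(inputs, weights, biases, activation):
    """One layer: each neuron applies activation(weighted sum + bias)."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hand-picked (not trained) parameters, chosen so the network computes XOR.
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]   # two hidden neurons, two inputs each
HIDDEN_B = [0.0, -1.0]
OUT_W = [[1.0, -2.0]]                 # one output neuron
OUT_B = [0.0]


def forward(x):
    """Pass the input through the hidden layer, then the output layer."""
    hidden = dense(x, HIDDEN_W, HIDDEN_B, relu)
    return dense(hidden, OUT_W, OUT_B, identity)[0]


outputs = [forward([a, b]) for a, b in [(0.0, 0.0), (0.0, 1.0),
                                        (1.0, 0.0), (1.0, 1.0)]]
```

XOR cannot be represented by a single linear neuron, so this small example also hints at why stacking layers matters. In practice the weights would be learned from data by a training procedure instead of being set by hand.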

There are several main neural network architectures used in deep learning:

- Convolutional Neural Networks (CNNs). These networks analyze input data (images, video) in parts using small “filters.” Each filter focuses on specific features such as shapes, colors, or textures. By passing data through filters, CNNs form a hierarchy of features that enables recognition and categorization of objects and discovery of patterns in visual data. CNNs are effective for pattern recognition and for processing images and video.

- Recurrent Neural Networks (RNNs). These networks process sequential data (text, speech) while taking context into account. Information from previous elements of the sequence influences how the RNN interprets the current element (much as earlier remarks shape the meaning of later ones in a conversation between people). RNNs are a natural fit for tasks where order and context matter, such as natural language processing, speech understanding, and machine translation.

- Transformers. A newer neural network architecture (introduced in 2017) that brought natural language processing to a new level of quality. Unlike RNNs, which process data sequentially, transformer models can simultaneously “see” all elements of a sequence and their relationships. This allows training to be parallelized and helps the model capture dependencies between distant parts of a long text.
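To make the “filter” idea from the CNN item concrete, the sketch below slides a small hand-written filter over a tiny grayscale image in plain Python. The image, the vertical-edge filter, and the function name `convolve2d` are hypothetical; in a real CNN the filter values are learned during training rather than written by hand.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image without padding.

    This is the cross-correlation that CNN "convolution" layers compute:
    at each position, multiply overlapping values and sum them.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hypothetical 3x4 image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A filter that responds where brightness increases from left to right.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, vertical_edge)
```

The resulting feature map is non-zero only in the column where the dark and bright regions meet, which is how a single filter localizes one specific visual feature.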

Deep Learning makes it possible to solve tasks that were previously unattainable (or very difficult) for computing systems—for example, image and speech recognition, “understanding” human language, and generating appropriate answers to questions asked by humans.

What business problems can Machine Learning and Artificial Intelligence solve?

Machine Learning (ML) and Artificial Intelligence (AI) are powerful tools that can help businesses in many ways—for example, by automating routine tasks, improving process efficiency, enhancing customer service, developing new products and services, and generating valuable recommendations through analysis of massive volumes of data.

Here are some examples of how Machine Learning and AI can be used to address business needs:

- Automation of repetitive tasks such as data entry, document processing, and customer support. This frees employees’ time for more complex work.

- Business process optimization. ML/AI can analyze data to identify inefficiencies and optimize processes, increasing company productivity.

- Service personalization. ML/AI can analyze customer data to provide personalized offers, recommendations, and support.

- Automation of answers to questions and prediction of customer needs.

- Detection of fraudulent transactions and cybersecurity.

- Risk management.

Practical examples of Machine Learning

Today, machine learning is widely used in many areas of life—from medicine and finance to marketing and manufacturing. Consider a few examples that can help you better understand how this technology can be applied to real-world problems.

- Amazon, Netflix, Spotify. Use ML to recommend products and content to customers based on personal purchase history, viewing and listening history, preferences, and other data.

- Google, Facebook, X (formerly Twitter). Use ML to show users the most relevant ads based on their interests, search history, demographic data, and social media behavior.

- Virtual assistants such as Apple Siri, Amazon Alexa, and Google Assistant. Use ML to understand natural language and fulfill user requests.

- IBM Watson. Uses ML to analyze medical images such as X-rays and MRI scans to support doctors in diagnosing diseases.

- Verily. Uses ML to develop algorithms that can diagnose diseases based on patient data such as symptoms, medical history, and test results.

- Walmart. Uses ML to forecast product demand, helping the company optimize inventory and supply.

- Uber. Uses ML to predict ride demand, helping the company optimize driver allocation.

These are just a few examples of how machine learning is used in business.

Today, ML technologies and algorithms are becoming increasingly powerful and productive, enabling even more complex problems to be solved. Companies that can use machine learning effectively will gain a meaningful competitive advantage in the market. At the same time, it is important to remember that Machine Learning is not a magic wand. For these technologies to be effective, high-quality data, skilled specialists, and a well-designed implementation strategy are required. The combination of these factors significantly increases the likelihood of using ML technologies successfully.