How NVIDIA AI Enterprise is changing the game in AI and ML

2025-04-29

NVIDIA AI Enterprise is an enterprise platform for the full lifecycle of AI/ML workloads. It combines optimized libraries, tools, and services to deliver scalability, stability, and support. Let's take a look at the platform's architecture, features, and benefits, as well as a step-by-step approach to implementation.

Artificial intelligence is becoming a critical resource in the digital economy. Companies are eager to implement AI-based solutions to improve operational efficiency, automate processes, and gain competitive advantages. However, implementing AI technologies, especially in complex infrastructures, often runs into compatibility, continuity, and support issues. These issues were the starting point for NVIDIA AI Enterprise, an integrated platform that simplifies the creation, deployment, and maintenance of AI/machine learning (ML) solutions. It is intended for projects of any complexity, from proof-of-concept pilots to large-scale implementations.

NVIDIA AI Enterprise Overview

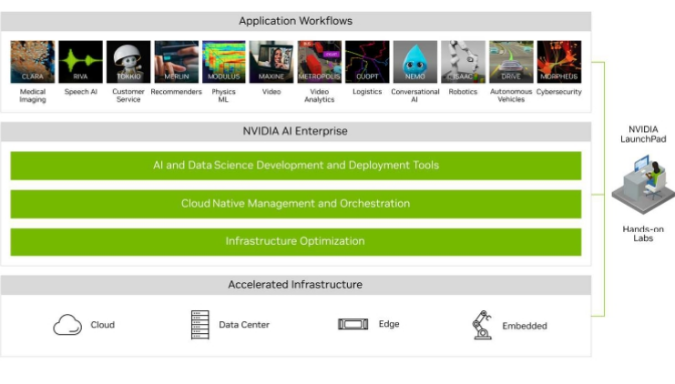

NVIDIA AI Enterprise is a commercial platform with enterprise-level support that enables companies to create, deploy, and manage AI/ML workloads on infrastructures of any complexity, from local data centers to the cloud. It is designed with a focus on stability, security, scalability, and resource optimization. The platform integrates more than 50 NVIDIA frameworks, libraries, and solutions that accelerate innovation in computer vision, natural language processing, generative AI, and deep learning.

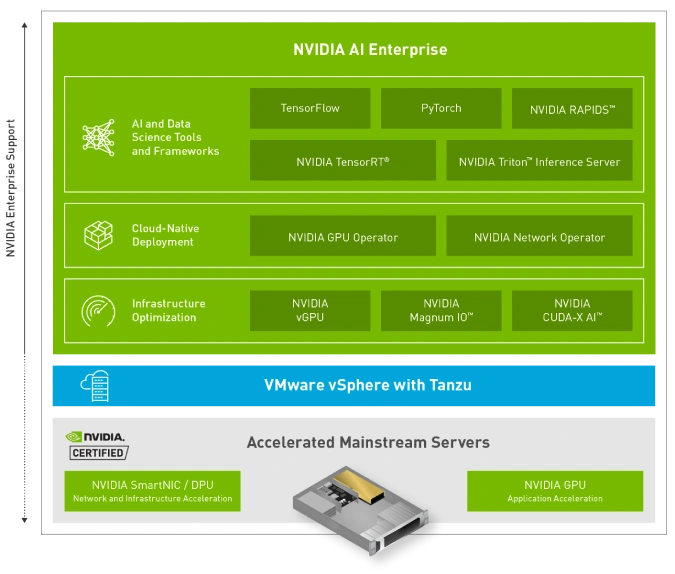

Its components include NVIDIA GPU drivers, optimized inference software (such as TensorRT and Triton Inference Server), container tools (NVIDIA Container Toolkit), RAPIDS libraries for data processing, and numerous examples and SDKs. This suite helps companies reduce development costs and time to market. Through integration with platforms such as VMware vSphere and Red Hat OpenShift, and with the AWS, Azure, and Google Cloud environments, AI Enterprise is designed to operate in hybrid and multi-cloud scenarios.

NVIDIA AI Enterprise is actively developed as a strategic part of NVIDIA's enterprise ecosystem. Beyond the software itself, it comes with technical documentation, security updates, regular releases, and specialized support. This makes it an attractive choice for teams working in healthcare, finance, retail, logistics, and manufacturing, where the reliability and performance of AI solutions are paramount.

What is NVIDIA AI Enterprise?

From a technical standpoint, NVIDIA AI Enterprise is a licensed software stack optimized for NVIDIA GPUs, including in virtualized environments. It supports frameworks such as TensorFlow, PyTorch, XGBoost, and RAPIDS, and provides pre-built containers from the NVIDIA NGC (NVIDIA GPU Cloud) container registry. This allows developers and data scientists to run training, inference, and data analysis tasks faster and more reliably, without the need for complex environment configuration.

A key feature is integration with enterprise environments, including the VMware virtualization platform. On certified VMware vSphere deployments, NVIDIA vGPU lets multiple isolated AI workloads share a single physical GPU, which improves resource utilization. Other supported environments include Red Hat OpenShift, SUSE Rancher, and Ubuntu, as well as cloud instances such as Amazon EC2 G5, Azure NC, and GCP A2.

In addition to the components themselves, AI Enterprise includes business-critical features: SLA-level technical support, security updates, patches, and stable versions of environments for production use. It is not just a set of libraries, but a full-fledged platform that allows you to turn AI development into a manageable business process.

How does NVIDIA AI Enterprise work?

NVIDIA AI Enterprise functions as a multi-level technology stack that covers all stages of the AI/ML lifecycle, from data preparation to model deployment in a production environment. The platform is based on GPU drivers that provide low-level interaction with hardware. On top of this layer are CUDA, cuDNN, and other computing libraries that enable the efficient use of NVIDIA GPUs for model training and inference.

Tools for managing the environment include the NVIDIA container runtime for Docker, Kubernetes support through GPU Operator, integration with Red Hat OpenShift, and Helm charts for rapid deployment of components. Components such as Triton Inference Server enable high-performance inference with support for multiple frameworks simultaneously, as well as dynamic load scaling. Triton can serve multiple versions of a model side by side, which, together with request routing, supports A/B testing of models, and it exposes performance metrics in Prometheus format that can be visualized in Grafana.
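To make the monitoring point concrete, here is a minimal Python sketch that parses Prometheus-format text of the kind Triton publishes on its metrics endpoint (by default port 8002, path `/metrics`). The sample text, the model names, and the helper names `parse_metrics` and `total` are illustrative assumptions, and the parser handles only simple `name{labels} value` lines rather than the full exposition format.

```python
import re
from collections import defaultdict

# Illustrative sample only; real output comes from Triton's /metrics endpoint.
SAMPLE = """\
# HELP nv_inference_request_success Number of successful inference requests
# TYPE nv_inference_request_success counter
nv_inference_request_success{model="resnet",version="1"} 120
nv_inference_request_success{model="bert",version="1"} 30
nv_inference_request_failure{model="resnet",version="1"} 2
"""

# Matches "metric_name{label="x",...} value" with an optional label section.
LINE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>[^}]*)\})?\s+(?P<value>\S+)$'
)
LABEL = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text):
    """Return {metric_name: [(labels_dict, value), ...]} for simple sample lines."""
    out = defaultdict(list)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = LINE.match(line)
        if not match:
            continue  # ignore anything this simplified parser does not understand
        labels = dict(LABEL.findall(match.group("labels") or ""))
        out[match.group("name")].append((labels, float(match.group("value"))))
    return dict(out)


def total(metrics, name):
    """Sum a metric across all label combinations (for example, across models)."""
    return sum(value for _, value in metrics.get(name, []))


metrics = parse_metrics(SAMPLE)
ok = total(metrics, "nv_inference_request_success")
failed = total(metrics, "nv_inference_request_failure")
print(f"success={ok:.0f} failed={failed:.0f} error_rate={failed / (ok + failed):.3f}")
```

In practice a Prometheus server scrapes the endpoint and Grafana queries Prometheus; a script like this is only useful for quick checks or for understanding what the raw metrics look like.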

For model training, NVIDIA AI Enterprise offers integration with NGC Workspaces: preconfigured development environments where you can run Jupyter Notebook, train models on cloud or local infrastructure, and perform hyperparameter optimization and experiments. When running workloads in virtualized environments such as VMware, GPU resources can be shared efficiently between workloads, making the platform suitable even for organizations with limited hardware resources.

Overview of NVIDIA AI Enterprise components and features

The NVIDIA AI Enterprise architecture is built around the principles of modularity and optimization for production environments. Among the key components of the platform is the NVIDIA CUDA Toolkit, a basic platform for parallel computing that provides access to GPU computing capabilities. Other important elements include cuDNN (deep learning primitives), NCCL (collective communication between GPUs for distributed computing), DALI (data loading and preprocessing), and RAPIDS, a set of libraries for working with data in the style of pandas, but with GPU acceleration.
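As an illustration of what "in the style of pandas" means for RAPIDS, the sketch below runs the same group-by aggregation with cuDF when it is installed and falls back to pandas otherwise. The column names and data are made up for the example, and the fallback pattern is an assumption for portability rather than something the platform prescribes.

```python
# Use cuDF (RAPIDS) when it is installed; otherwise fall back to pandas so the
# same script still runs on a machine without a GPU.
try:
    import cudf as xdf
    BACKEND = "cudf"
except ImportError:
    import pandas as xdf
    BACKEND = "pandas"

# Made-up sample data: sales figures for two stores.
df = xdf.DataFrame({
    "store": ["a", "b", "a", "b", "a"],
    "sales": [10.0, 20.0, 30.0, 40.0, 50.0],
})

# The group-by call is written the same way for both libraries.
mean_sales = df.groupby("store")["sales"].mean()

# cuDF keeps results in GPU memory; copy them to the host before converting.
if BACKEND == "cudf":
    mean_sales = mean_sales.to_pandas()

result = mean_sales.sort_index().to_dict()
print(BACKEND, result)
```

The point of the design is that existing pandas code often needs only a changed import to run on the GPU, although not every pandas feature has a cuDF equivalent.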

In the inference segment, the platform is complemented by Triton Inference Server, a service capable of serving models created in TensorFlow, PyTorch, ONNX, XGBoost, and others. It processes requests in real time, supports dynamic model loading, and can optimize computations using TensorRT. This makes it possible to achieve low response latency under high load without losing model accuracy.
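To show what a real-time request to Triton looks like, here is a minimal sketch that builds (but does not send) an HTTP request in the KServe v2 inference protocol, which Triton's HTTP endpoint accepts (default port 8000). The model name `my_model`, the tensor name `INPUT0`, and the helper `build_infer_request` are hypothetical; a real model defines its own tensor names, shapes, and datatypes.

```python
import json
import urllib.request


def build_infer_request(base_url, model_name, input_name, shape, data, datatype="FP32"):
    """Build (but do not send) a POST request for the KServe v2 inference protocol."""
    # The flattened data must contain exactly as many values as the shape implies.
    expected = 1
    for dim in shape:
        expected *= dim
    if expected != len(data):
        raise ValueError(f"shape {shape} needs {expected} values, got {len(data)}")

    body = {
        "inputs": [
            {
                "name": input_name,
                "shape": list(shape),
                "datatype": datatype,
                "data": list(data),
            }
        ]
    }
    return urllib.request.Request(
        url=f"{base_url}/v2/models/{model_name}/infer",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical model and tensor names, used only for illustration.
req = build_infer_request(
    "http://localhost:8000", "my_model", "INPUT0", [1, 4], [0.1, 0.2, 0.3, 0.4]
)
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
# To send it against a running server: urllib.request.urlopen(req)
```

For production clients, NVIDIA's `tritonclient` package wraps this protocol and adds gRPC support; the raw request is shown here only to make the wire format visible.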

For deployment automation, NVIDIA offers Helm charts and GPU Operator, which allow you to quickly and reliably install all the necessary components in a Kubernetes environment. In addition, users can take advantage of ready-made Docker images from NVIDIA NGC, which are regularly tested for compatibility and performance. This greatly simplifies CI/CD processes, ensures environment reproducibility, and reduces the risks associated with updates.
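Once GPU Operator is installed, Kubernetes workloads request GPUs through the `nvidia.com/gpu` resource. The sketch below generates a minimal Pod manifest as JSON, which `kubectl` accepts alongside YAML. The pod name, the helper `gpu_pod_manifest`, and the `<tag>` placeholder in the image reference are assumptions; substitute a real tag from the NGC catalog before applying it.

```python
import json


def gpu_pod_manifest(name, image, gpus=1, command=None):
    """Return a Kubernetes Pod manifest (as a dict) that requests NVIDIA GPUs."""
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # nvidia-smi prints the GPUs visible inside the container.
                    "command": command or ["nvidia-smi"],
                    # GPUs are requested through limits on this extended resource.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# "<tag>" is a placeholder, not a real image tag.
manifest = gpu_pod_manifest("gpu-smoke-test", "nvcr.io/nvidia/pytorch:<tag>")
print(json.dumps(manifest, indent=2))
```

If the script is saved under a name of your choice, for example `make_pod.py`, its output can be piped to `kubectl apply -f -`. A pod that prints the expected GPU list is a quick sign that the driver, container runtime, and device plugin are working together.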

In the field of natural language processing and computer vision, NVIDIA AI Enterprise includes pre-trained models and SDKs, including TAO Toolkit for transfer learning, NeMo for working with language models, and DeepStream for video analytics. These tools are aimed at both researchers and engineers who want to integrate AI into their applications without writing code from scratch. The models can be customized for specific business requirements and easily adapted to new datasets.

The platform also integrates with leading monitoring and logging systems (Prometheus, Grafana, and Fluentd), allowing organizations to establish a complete cycle of monitoring the performance of models and the entire AI infrastructure. This is critical for meeting SLA requirements and complying with security standards.

What are the benefits of NVIDIA AI Enterprise for AI and ML?

One of the key benefits of NVIDIA AI Enterprise is its ability to reduce the technical complexity of AI implementation. With pre-tested components and certified infrastructure, this platform significantly reduces implementation costs and accelerates time to results. Organizations gain access to tools that work out of the box and can start experimenting with AI projects without deep knowledge of DevOps or GPU infrastructure.

For development and research teams, environment stability is of great importance. NVIDIA AI Enterprise provides repeatable results, version control, component compatibility support, and predictable performance. This makes it a convenient platform for both the R&D phase and large-scale production deployment. It is also important that NVIDIA provides SLA-level support, which is critical for organizations with high requirements for service continuity.

Another significant advantage is scalability. The platform is equally well suited for small AI projects on a single machine and for distributed tasks on a cluster of GPU servers. This allows you to start small and then expand your resources without having to completely change your stack. Integration with VMware, Kubernetes, and clouds provides the flexibility required for modern AI infrastructure.

Finally, an important advantage is access to the NVIDIA NGC ecosystem: thousands of optimized models, examples, tools, and guides. This not only speeds up the start, but also allows you to learn from examples, scale your team's knowledge, and leverage industry best practices.

How to get started with NVIDIA AI Enterprise?

The first step is to determine the type of infrastructure you plan to use: on-premises, virtualized, or cloud. NVIDIA AI Enterprise supports bare-metal servers, virtualized and container platforms (VMware vSphere, Red Hat OpenShift), and public clouds. The choice of environment affects the deployment method: in the case of Kubernetes, through Helm charts; in VMware, through vSphere Plugin or vGPU profiles.

The next step is to obtain an NVIDIA AI Enterprise license, which provides access to certified builds, documentation, support services, and regular updates. The product can be purchased through authorized partners or directly from NVIDIA. We also recommend using the NVIDIA LaunchPad portal, a free test environment where you can explore the platform's capabilities in a live demo format.

Deployment usually begins with the installation of drivers, CUDA, and the necessary libraries. This is followed by integration with monitoring systems, preparation of the CI/CD environment, and setup of developer tools (NGC CLI, JupyterHub, MLflow). Optionally, you can configure load balancing, performance testing, and automatic model scaling in Triton Inference Server.
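After installing the driver, a quick sanity check is to ask `nvidia-smi` which GPUs it can see. The following is a minimal sketch of such a check; the helper names `parse_gpu_csv` and `detect_gpus` are illustrative, and the script simply reports no GPUs if `nvidia-smi` is not on the PATH.

```python
import shutil
import subprocess

# Standard nvidia-smi query options: one CSV line per GPU, no header row.
QUERY = ["--query-gpu=name,driver_version,memory.total", "--format=csv,noheader"]


def parse_gpu_csv(text):
    """Parse 'name, driver_version, memory.total' lines into a list of dicts."""
    gpus = []
    for line in text.strip().splitlines():
        parts = [part.strip() for part in line.split(",")]
        if len(parts) != 3:
            continue  # skip lines that do not match the expected layout
        gpus.append(
            {"name": parts[0], "driver_version": parts[1], "memory_total": parts[2]}
        )
    return gpus


def detect_gpus():
    """Return GPUs reported by nvidia-smi, or an empty list if it is unavailable."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return []
    try:
        result = subprocess.run(
            [exe, *QUERY], capture_output=True, text=True, timeout=30
        )
    except (OSError, subprocess.TimeoutExpired):
        return []
    if result.returncode != 0:
        return []
    return parse_gpu_csv(result.stdout)


gpus = detect_gpus()
if gpus:
    for gpu in gpus:
        print(f"{gpu['name']}: driver {gpu['driver_version']}, {gpu['memory_total']}")
else:
    print("No NVIDIA GPU detected (nvidia-smi missing or returned an error).")
```

A check like this fits naturally at the start of a CI/CD job or a provisioning script, so that later steps fail early with a clear message instead of an obscure CUDA error.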

Once the basic configuration is complete, teams can start training their own models or adapt ready-made ones from the NGC catalog. If support is needed, NVIDIA consulting and the user community are available. Thus, AI Enterprise is a platform that not only simplifies launch but also provides a complete lifecycle for AI solutions, from idea to implementation. Companies can quickly move from a research prototype to a scalable production system with minimal risk and support costs. With its clear positioning in the enterprise AI ecosystem, it provides a reliable foundation for digital transformation and long-term business growth.

Summary

NVIDIA AI Enterprise is not just a technology product, but a strategic tool for accelerating the adoption of artificial intelligence in business processes. With its flexible architecture, broad framework support, and certification for leading virtualization and cloud platforms, it significantly lowers the barrier to entry into AI and allows you to focus on creating value rather than infrastructure issues. For companies seeking to be at the forefront of technological development, NVIDIA AI Enterprise offers stability, scalability, and full support at every stage. This solution is ideal for teams that value control, performance, and long-term reliability in the field of artificial intelligence.